The Question

The King Wen sequence is the traditional ordering of the 64 hexagrams in the I-Ching, attributed to King Wen of Zhou around 1050 BC. For three millennia, this ordering has been studied as a map of change — each hexagram flowing into the next through a logic that resists simple characterization.

In 2025, an analysis of the sequence's mathematical properties revealed something unexpected. The transitions between hexagrams exhibit high variance and negative lag-1 autocorrelation: a large change tends to be followed by a small one, and a small change by a large one, so the sequence never settles into a run of similar steps. Borrowing a term from the study of learning, this resembles an anti-habituation signal: a pattern that keeps the observer from falling into predictable routines.
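The two statistics named above can be made concrete with a short sketch. It assumes that the size of a transition is the number of lines that differ between consecutive hexagrams (a Hamming distance), which the analysis may or may not have used, and it runs on a made-up toy sequence rather than the actual King Wen ordering. The function names are illustrative.

```python
from statistics import pvariance

def hamming(a: str, b: str) -> int:
    """Count the line positions at which two six-line hexagrams differ."""
    return sum(x != y for x, y in zip(a, b))

def transitions(hexagrams):
    """Transition sizes between each consecutive pair of hexagrams."""
    return [hamming(a, b) for a, b in zip(hexagrams, hexagrams[1:])]

def lag1_autocorrelation(xs):
    """Correlation between each value and the value that follows it."""
    m = sum(xs) / len(xs)
    denom = sum((x - m) ** 2 for x in xs)
    if denom == 0:
        return 0.0  # a constant series has no defined correlation; report 0
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    return num / denom

# Hypothetical toy data: NOT the King Wen sequence, just an alternating example.
toy = ["111111", "000000", "000001", "111110", "111111", "000000", "000010"]
steps = transitions(toy)

print("transitions:", steps)
print("variance:", pvariance(steps))
print("lag-1 autocorrelation:", round(lag1_autocorrelation(steps), 3))
```

A negative autocorrelation here means large and small steps tend to alternate, which is the property the article calls anti-habituation.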

This raised a provocative question: could these properties, refined over three millennia of human interpretation, improve the training of artificial neural networks? Modern AI training follows carefully designed schedules — learning rates that warm up, hold steady, and decay. What if the King Wen sequence encoded a better schedule?

Experiment 1: The Learning Rate Schedule

The first test was direct. In neural network training, the learning rate controls how aggressively the model updates its understanding with each batch of data. Too fast and the model overshoots; too slow and it stagnates. Conventional schedules use smooth curves — a gradual warmup, a steady period, then a gentle decay.

The King Wen schedule replaced this smooth curve with the sequence's surprise profile: high-surprise transitions produced aggressive learning, low-surprise transitions produced cautious learning. The anti-habituation property would, in theory, prevent the optimizer from settling into unproductive routines.
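One way such a schedule could be built is sketched below. This is not the experiment's code: the warmup, hold, and decay fractions, the peak learning rate, the cosine decay shape, the cyclic mapping from training step to profile position, and the `strength` parameter are all assumptions made for illustration. The profile passed in is a toy list, not the real surprise profile.

```python
import math

def base_lr(step, total_steps, peak_lr=3e-4, warmup_frac=0.1, decay_frac=0.3):
    """Smooth baseline: linear warmup, constant hold, cosine decay (assumed shape)."""
    warmup = max(1, int(total_steps * warmup_frac))
    decay_start = total_steps - int(total_steps * decay_frac)
    if step < warmup:
        return peak_lr * (step + 1) / warmup
    if step < decay_start:
        return peak_lr
    progress = (step - decay_start) / max(1, total_steps - decay_start)
    return peak_lr * 0.5 * (1 + math.cos(math.pi * progress))

def modulated_lr(step, total_steps, profile, strength, **kwargs):
    """Scale the baseline up at high-surprise positions and down at low ones.

    `strength` in [0, 1) sets how far the rate may swing; 0 recovers the baseline.
    The profile is mean-centred and scaled to [-1, 1], then cycled over the steps.
    """
    m = sum(profile) / len(profile)
    spread = max(abs(p - m) for p in profile) or 1.0  # avoid dividing by zero
    z = (profile[step % len(profile)] - m) / spread
    return base_lr(step, total_steps, **kwargs) * (1 + strength * z)

# Hypothetical toy profile standing in for the real transition sizes.
toy_profile = [6, 1, 6, 1, 6, 1]
for s in (20, 21):
    print(s, base_lr(s, 100), modulated_lr(s, 100, toy_profile, strength=0.2))
```

Sweeping `strength` from 0 upward gives a family of schedules comparable in spirit to the configurations described next, with the unmodulated case as the baseline.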

Six configurations were tested against a standard baseline, varying the strength of the King Wen modulation. The results were unambiguous: every King Wen configuration performed worse than the baseline. The model trained with the standard smooth schedule consistently learned more effectively.

The strongest modulation degraded performance by 2% — modest in absolute terms, but in a controlled experiment where the only variable is the schedule, this is a clear signal. The King Wen sequence's anti-habituation property, far from preventing unproductive routines, was actively disrupting productive ones.

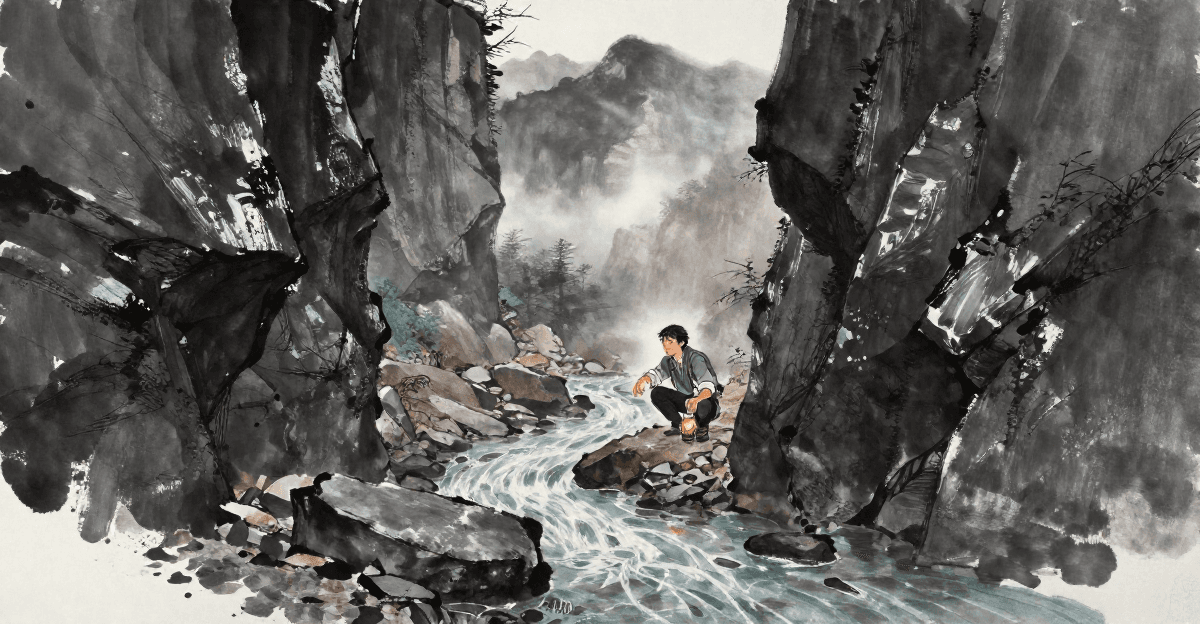

天行,健。君子以自強不息。

— 易經・乾・象傳

Heaven's movement is ceaseless. The noble one matches this through continuous self-strengthening.

The first hexagram's image commentary describes unceasing, steady effort — precisely the kind of smooth, sustained optimization that gradient descent requires. The King Wen sequence's anti-habituation profile works against this principle: it disrupts continuity rather than sustaining it.

Experiment 2: The Curriculum

The second test took a different approach. Instead of modulating how fast the model learns, it changed what the model learns and when.

In education, curriculum design matters. Teaching arithmetic before calculus is more effective than the reverse. Recent research in AI training has confirmed the same principle: the order in which data is presented affects what the model ultimately learns.

The King Wen sequence was used to reorder training batches by difficulty. Each batch of data was scored by its complexity, then presented in the order dictated by the King Wen surprise profile — harder material at high-surprise positions, easier material at low-surprise positions. Five alternative orderings served as controls: sequential (no reordering), random shuffle, easy-to-hard, hard-to-easy, and a simple sawtooth pattern.
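The reordering described above can be sketched as a rank-matching step: the hardest batch goes to the highest-surprise position, the next hardest to the next highest, and so on. The difficulty scores, the cyclic stretching of the profile over the batches, and the function names are assumptions for illustration. The sawtooth control is left out because its exact shape is not specified here.

```python
import random

def order_by_profile(difficulties, profile):
    """Return batch indices in presentation order, matching difficulty rank
    to surprise rank (hardest batch at the highest-surprise position)."""
    n = len(difficulties)
    # Cycle the profile so every batch position has a surprise value (assumed).
    slots = [profile[i % len(profile)] for i in range(n)]
    positions = sorted(range(n), key=lambda i: slots[i], reverse=True)
    batches = sorted(range(n), key=lambda i: difficulties[i], reverse=True)
    order = [0] * n
    for pos, batch in zip(positions, batches):
        order[pos] = batch
    return order

def control_orderings(difficulties, seed=0):
    """Four of the control orderings named in the text."""
    n = len(difficulties)
    easy_to_hard = sorted(range(n), key=lambda i: difficulties[i])
    shuffled = list(range(n))
    random.Random(seed).shuffle(shuffled)
    return {
        "sequential": list(range(n)),
        "random": shuffled,
        "easy_to_hard": easy_to_hard,
        "hard_to_easy": easy_to_hard[::-1],
    }

# Hypothetical difficulty scores and a toy surprise profile.
difficulties = [0.2, 0.9, 0.5, 0.1]
toy_profile = [6, 1, 6, 1]
order = order_by_profile(difficulties, toy_profile)
controls = control_orderings(difficulties)
print("profile-matched order:", order)
```

Every ordering is a permutation of the same batches, so the model sees identical data in each condition and only the presentation order differs.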

The results were surprising, but not in the way hoped. On one machine, random shuffling produced the best results — better than any structured ordering, including King Wen. King Wen was the worst-performing non-sequential ordering. On a second machine running different software, no ordering produced any measurable effect at all.

蒙:亨。匪我求童蒙,童蒙求我。初筮告,再三瀆,瀆則不告。利貞。

— 易經・蒙・彖

Youthful Learning: success. It is not I who seek the young learner; the young learner seeks me. At the first inquiry, I give instruction. If asked two or three times, that is importunity, and to the importunate I give no instruction. Perseverance is favorable.

Hexagram 4 prescribes a specific pedagogy: the student must come ready. Repetitive, forced exposure degrades learning. Yet the curriculum experiment found that forced ordering — including King Wen's — added nothing beyond what random exposure provided. The ancient text warns against exactly the kind of imposed structure the experiment tried.

What Went Wrong — Or Did It?

Two experiments, two negative results. The King Wen sequence does not improve neural network training, whether applied as a learning rate schedule or a curriculum ordering.

But negative results are not failures. They are boundaries. These experiments established, with rigorous controls and statistical analysis, that in this setting the King Wen sequence's properties (high variance and negative lag-1 autocorrelation) do not help gradient-based optimization: applied to the learning rate they degraded training, and applied to the curriculum they did no better than random shuffling. The anti-habituation signal that might benefit a human learner destabilizes the mathematical process by which neural networks learn.

This is not a surprising result, in retrospect. Gradient descent is a continuous optimization process that benefits from smooth, predictable updates. Introducing unpredictability into this process is like shaking a table while someone is trying to thread a needle. The hand needs to be steady, not surprising.

The question was never whether the King Wen sequence contains structure — the mathematical analysis confirms it does. The question is whether that structure is useful in a given domain. For continuous optimization, the answer is no. But domains exist where unpredictability is not a liability but a strategic advantage. The subsequent experiments in this series explore that possibility.

地勢,坤。君子以厚德載物。

— 易經・坤・象傳

Earth's tendency is receptive. The noble one matches this through depth of character, bearing all things.

The second hexagram counsels receptivity — accepting results as they are rather than forcing them to match expectations. A negative result, received with intellectual honesty, strengthens the foundation for future inquiry. The ground must be understood before anything can be built upon it.