The Nature-Nurture Debate, 2300 Years Later

After two experiments showed the King Wen sequence did not improve AI training, the question shifted from the sequence itself to the conditions surrounding it. Perhaps the problem was not the curriculum but the student.

The Junzi Alignment hypothesis proposes that an AI model's disposition — its tendency toward certain behaviors — is shaped by two factors: its random initialization (the 'seed') and its training curriculum. This mirrors one of China's oldest philosophical debates.

Mengzi argued that moral tendencies are innate — present from birth like sprouts waiting to grow.

惻隱之心,仁之端也;羞惡之心,義之端也;辭讓之心,禮之端也;是非之心,智之端也。人之有是四端也,猶其有四體也。

— 孟子・公孫丑上

The heart of compassion is the sprout of benevolence; the heart of shame is the sprout of righteous conduct; the heart of deference is the sprout of propriety; the heart of right and wrong is the sprout of wisdom. That people have these four sprouts is like their having four limbs.

Mengzi's 'four sprouts' theory predicts that different seeds should produce different moral dispositions — just as the Junzi hypothesis predicts that different random initializations should produce different behavioral dispositions in neural networks.

Xunzi took the opposite view. Human nature is raw material; only sustained cultivation produces virtue.

青、取之於藍,而青於藍;冰、水為之,而寒於水。…干、越、夷、貉之子,生而同聲,長而異俗,教使之然也。

— 荀子・勸學

Blue dye extracted from indigo surpasses indigo; ice formed from water is colder than water. Children of Gan, Yue, Yi, and Mo are born with identical cries but grow up with entirely different customs — education makes them so.

Xunzi's position predicts that the training data (curriculum) overwhelms any innate disposition from the seed. If this is correct, changing the random initialization should have negligible effect on the trained model's behavior.

A sweep of 30 random seeds was run on the same training setup. Each seed produces a different random initialization — a different starting point for the same learning process. If Mengzi is right, different seeds should produce measurably different behavioral signatures. If Xunzi is right, they should all converge to approximately the same result.

Xunzi won. The variation between seeds was approximately 0.04 on the evaluation metric — small enough that it could not be distinguished from measurement noise. No 'behavioral personalities' emerged. No seed produced a distinctly different kind of model. The starting point is effectively irrelevant; the training process dominates.

For the King Wen hypothesis, this meant that any curriculum effect would need to exceed 0.04 to be distinguishable from the background noise of seed variation — a threshold that none of the subsequent experiments cleared.
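The comparison against the seed noise floor can be sketched in a few lines. This is a minimal illustration, not the analysis code used in the experiments: the function names are hypothetical, the spread is assumed here to be a sample standard deviation (the text does not say how the 0.04 figure was computed), and the scores are made-up numbers.

```python
import statistics


def seed_noise_floor(scores):
    """Spread of the evaluation metric across seeds.

    Assumption: spread is measured as the sample standard deviation.
    """
    if len(scores) < 2:
        raise ValueError("need at least two seeds to estimate spread")
    return statistics.stdev(scores)


def clears_noise_floor(baseline_score, treatment_score, noise_floor):
    """True if two conditions differ by more than the seed spread."""
    return abs(treatment_score - baseline_score) > noise_floor


# Illustrative numbers only, not results from the experiments.
example_scores = [2.10, 2.14, 2.06, 2.12, 2.08]
floor = seed_noise_floor(example_scores)
print(f"estimated noise floor: {floor:.3f}")
print("small difference clears floor:", clears_noise_floor(2.10, 2.11, floor))
print("large difference clears floor:", clears_noise_floor(2.10, 2.30, floor))
```

A curriculum effect is only worth discussing if the second function returns true for it; a difference smaller than the seed spread could be reproduced just by changing the random initialization.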

The Platform Mystery

With the seed sensitivity question settled, attention returned to the curriculum experiments — but from a new angle. The first curriculum test had been run on one machine. What would happen on a different one?

The same six ordering conditions (sequential, random shuffle, easy-to-hard, hard-to-easy, King Wen, and a control) were run on two machines. The first was a desktop PC with an NVIDIA graphics card, using PyTorch, one of the most widely used AI training frameworks. The second was a laptop with an Apple processor, using MLX, Apple's own machine-learning framework.

The results were startling. On the NVIDIA machine, every alternative ordering beat the sequential baseline — random shuffling by roughly three times the seed noise floor. The effect reproduced consistently, which made the next result all the more puzzling.

On the Apple machine: nothing. No ordering produced any improvement outside the noise floor. The same experiment, the same data, the same model architecture, the same evaluation — and completely different results.

Both machines were then tested with a second difficulty metric to rule out measurement artifacts. The platform gap persisted. The effect was not about how difficulty was measured. It was about the platform itself.

The Discovery Nobody Expected

The explanation turned out to have nothing to do with the I-Ching.

The NVIDIA machine used torch.compile, a PyTorch feature that compiles the model into specialized, high-speed routines tuned to a fixed computation and memory layout. That specialization was the culprit, but not in the way it first appeared.

To present data in a chosen order, the reordering code buffered batches and cloned them into fresh GPU tensors. This broke the memory layout torch.compile had assumed, and a control condition gave it away. A 'pass-through' run that buffered and cloned the batches but left their order unchanged degraded training just as badly as the reordered runs. Same data order, same damage. The interaction was with the buffering and cloning, not with the ordering of the content, and certainly not with the King Wen sequence. In the first version of the reordering code the interaction was severe enough to count as an implementation bug; switching to pinned CPU memory with a single reusable GPU tensor eliminated the torch.compile interaction entirely.
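The logic of the pass-through control can be shown without any GPU code. The sketch below is framework-agnostic and is not the project's reordering code: `buffered_stream`, `pass_through`, and `make_shuffle` are hypothetical names, plain Python lists stand in for batches, and `copy.deepcopy` stands in for cloning a batch into a fresh tensor. The point is that the control goes through exactly the same buffering and copying as a reordered run, and differs only in the order it emits.

```python
import copy
import random


def buffered_stream(batches, buffer_size, order_fn):
    """Yield batches after buffering and copying them, ordered by order_fn.

    copy.deepcopy is a stand-in for cloning into a fresh tensor.
    """
    buffer = []
    for batch in batches:
        buffer.append(copy.deepcopy(batch))
        if len(buffer) == buffer_size:
            yield from order_fn(buffer)
            buffer = []
    if buffer:  # flush the final, partially filled buffer
        yield from order_fn(buffer)


def pass_through(buffer):
    """Control condition: same buffering and copying, order unchanged."""
    return list(buffer)


def make_shuffle(seed):
    """Build an ordering function that shuffles each buffer reproducibly."""
    rng = random.Random(seed)

    def shuffle(buffer):
        shuffled = list(buffer)
        rng.shuffle(shuffled)
        return shuffled

    return shuffle


# Toy batches; real batches would be token tensors.
batches = [[i, i + 1] for i in range(0, 12, 2)]
control = list(buffered_stream(batches, buffer_size=4, order_fn=pass_through))
shuffled = list(buffered_stream(batches, buffer_size=4, order_fn=make_shuffle(0)))

print("control keeps original order:", control == batches)
print("control batches are copies:", control[0] is not batches[0])
print("shuffled run has same content:", sorted(shuffled) == sorted(batches))
```

If a run fed by `pass_through` behaves like the reordered runs rather than like the untouched baseline, the data path is responsible, not the ordering.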

On the Apple machine, the MLX setup had no equivalent compilation step, so buffering and cloning cost nothing and 'reordering' showed no effect. Once the layout bug was fixed on the NVIDIA side, the remaining differences between orderings, including random shuffle, fell within the seed-noise floor. What had looked like a curriculum effect was an artifact of how the data was fed to a compiled model.

This was the unexpected lesson — a platform's compilation strategy can interact with the data-loading path to manufacture phantom curriculum effects. It has nothing to do with ancient Chinese sequences and everything to do with the invisible optimization layers built into modern AI tools.

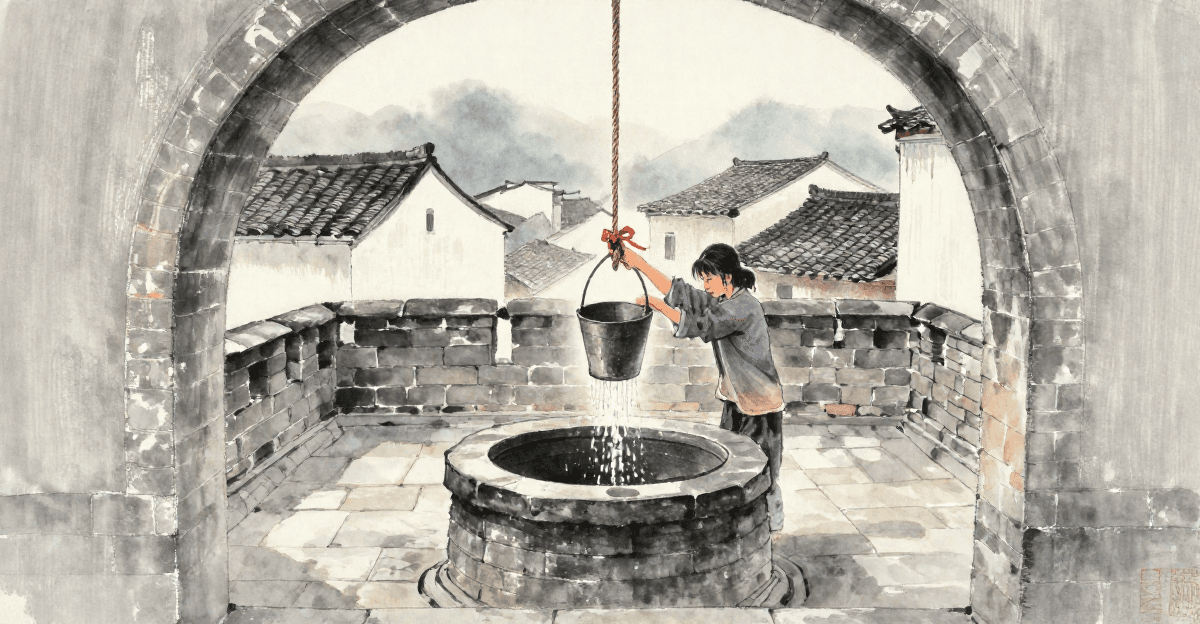

井渫不食,為我心惻。可用汲,王明,並受其福。

— 易經・井・九三

The well has been dredged clean but no one drinks from it. This makes my heart ache. It could be drawn from — if the ruler were wise, all would share in its blessing.

The curriculum experiments dredged the well of data ordering and found it empty for the King Wen hypothesis. But the process of dredging revealed something else entirely: a hidden interaction between platform optimization and the data-loading path that does not appear to have been documented before. The well was empty of what was sought, but contained something unexpected.

One Last Attempt

Before abandoning the curriculum hypothesis entirely, one more approach was tested. All previous experiments used fixed orderings — the same sequence applied uniformly throughout training. What if the model itself chose which data to learn from?

An adaptive algorithm was implemented using UCB1 (Upper Confidence Bound), a standard algorithm for the multi-armed bandit problem. The training data was divided into eight difficulty levels, each treated as one option to choose from. As the model trained, the algorithm tracked how much learning progress each difficulty level produced. It then directed most of the training toward the levels where the model was improving fastest, while still revisiting the less-sampled levels often enough to keep its estimates current: a kind of self-directed curriculum.
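A minimal sketch of UCB1 over eight difficulty levels follows. It uses the textbook selection rule (mean reward plus the exploration bonus sqrt(2 ln t / n)), which may differ in detail from the variant used in the experiments. How learning progress was measured is not stated above, so the `simulated_progress` function and its reward probabilities are purely hypothetical, and rewards are assumed to lie in [0, 1].

```python
import math
import random


class UCB1:
    """Textbook UCB1 bandit: one arm per difficulty level."""

    def __init__(self, num_arms):
        self.counts = [0] * num_arms   # times each level was chosen
        self.means = [0.0] * num_arms  # running mean reward per level

    def select(self):
        # Try every level once before using the confidence bound.
        for arm, count in enumerate(self.counts):
            if count == 0:
                return arm
        total = sum(self.counts)
        scores = [
            mean + math.sqrt(2.0 * math.log(total) / count)
            for mean, count in zip(self.means, self.counts)
        ]
        return max(range(len(scores)), key=scores.__getitem__)

    def update(self, arm, reward):
        self.counts[arm] += 1
        # Incremental update of the running mean.
        self.means[arm] += (reward - self.means[arm]) / self.counts[arm]


def simulated_progress(level, rng):
    """Hypothetical reward: level 3 yields progress more often than the rest."""
    probability = 0.9 if level == 3 else 0.1
    return 1.0 if rng.random() < probability else 0.0


rng = random.Random(0)
bandit = UCB1(num_arms=8)
for _ in range(2000):
    level = bandit.select()
    bandit.update(level, simulated_progress(level, rng))

print("times each difficulty level was chosen:", bandit.counts)
```

In a real training loop the reward would come from the model, for example a normalized drop in loss after training on a batch from the chosen level. In that setting rewards drift as the model learns, which plain UCB1 does not account for.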

The result: the adaptive approach performed identically to random shuffling. Both were marginally better than sequential ordering, but the margin was within the noise floor. The model gained nothing from being allowed to choose its own curriculum.

This was the final piece of evidence. Across all experiments (fixed orderings, different difficulty metrics, different platforms, and now adaptive selection) the pattern was always the same: once the data-loading artifact was removed, disrupting the sequential data pattern produced at most a marginal benefit that stayed within the noise floor. The specific form of that disruption, whether random, structured, King Wen, or self-selected, made no measurable difference.

蓬生麻中,不扶而直;白沙在涅,與之俱黑。…其質非不美也,所漸者然也。

— 荀子・勸學

Mugwort growing among hemp stands straight without support; white sand placed in black mud becomes black with it. Its quality is not unworthy — it is what it steeps in that determines the outcome.

Xunzi's environmental determinism proved right at this scale. The 'environment' of the training process — the framework, the hardware, the data packing — mattered far more than any ordering imposed upon it. The mugwort grows straight not because of its innate nature or the farmer's planting sequence, but because the hemp around it holds it upright.

What the Well Contains

Three attempts to rescue the King Wen curriculum hypothesis — through seed sensitivity, cross-platform replication, and adaptive selection — produced three negative results for the original question and one genuinely unexpected discovery.

The seed sensitivity experiment confirmed Xunzi over Mengzi at this scale: the starting conditions of a small neural network do not produce meaningful behavioral differentiation. Training overwhelms initialization.

The cross-platform comparison revealed that what appeared to be a curriculum effect on one machine was actually an artifact of platform-level optimization. This finding about how modern AI tools interact with the data-loading path has implications well beyond this research.

The adaptive curriculum demonstrated that even when the model is allowed to direct its own learning, it gains nothing beyond simple decorrelation of the sequential data pattern.

The well has been thoroughly dredged. In the domain of continuous optimization, here the training of small neural networks on text data, the King Wen sequence showed no measurable effect. But the research established something valuable: a clear boundary. The sequence's properties have not been shown to be universally useless. They are specifically unsuited to gradient descent as tested here. In a different domain, where unpredictability is an advantage rather than a disruption, those same properties might find their natural application.